FFA members who gathered for the wrap-up session of the inaugural FFA Profit Plots program got a basic lesson in statistics. Joel Wahlman, superintendent at the Southeast Purdue Agricultural Center, who helped develop the FFA Profit Plots program, used plot results to explain why you can’t always draw meaningful conclusions from a trial such as this.

The FFA members were from four chapters: Batesville FFA, South Decatur FFA, South Ripley FFA and North Decatur FFA. Members from each chapter cooked up a recipe for how corn should be grown in their plot. The goal was to see which chapter could make the most net profit. Batesville FFA won, but Wahlman wanted to make sure lessons went deeper than just who took home the traveling trophy.

Replication matters

All four recipes were planted in four different replications. “We also randomized in each replication,” Wahlman says. “Our goal was to introduce students to scientific testing so they would have a better idea of what we do at SEPAC.”

Results gave Wahlman and his assistant, Alex Helms, opportunities to demonstrate the value of research methods.

“In one of the four replications, the formula for one school was far better than any others in test,” Wahlman says. “We told them that if we had only planted that replication, we would have thought that treatment was by far the best. There wasn’t nearly that much difference in other replications.”
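Wahlman’s point can be illustrated with a few lines of Python. This is only a sketch: the recipe names and yields below are hypothetical numbers invented for illustration, not results from the FFA plots. It compares how far the top recipe leads when you look at one replication versus the average of all four.

```python
from statistics import mean

# Hypothetical yields in bushels per acre, one value per replication.
# These are made-up numbers, NOT the actual FFA Profit Plots results.
yields = {
    "Recipe A": [230, 198, 201, 195],
    "Recipe B": [200, 202, 197, 199],
    "Recipe C": [196, 200, 204, 198],
    "Recipe D": [199, 195, 200, 202],
}

def lead_of_best(value_by_recipe):
    """Return the top recipe and its margin over the runner-up."""
    ranked = sorted(value_by_recipe.items(), key=lambda kv: kv[1], reverse=True)
    (best, top), (_, second) = ranked[0], ranked[1]
    return best, top - second

# What we would have seen if only the first replication had been planted.
rep1_only = {name: reps[0] for name, reps in yields.items()}
# What we see when every replication is averaged.
all_reps = {name: mean(reps) for name, reps in yields.items()}

rep1_best, rep1_margin = lead_of_best(rep1_only)
mean_best, mean_margin = lead_of_best(all_reps)

print(f"Replication 1 only: {rep1_best} leads by {rep1_margin} bu")
print(f"Average of 4 reps:  {mean_best} leads by {mean_margin} bu")
```

In this made-up data set, one unusually good plot makes a single recipe look like a runaway winner, while the four-replication average shows a much smaller gap.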

Significant difference

“Do we know if any of the yield averages from the four recipes are statistically different from the others?” Wahlman asks. “If we did the same test next year, could we expect the same results? Are results repeatable?”

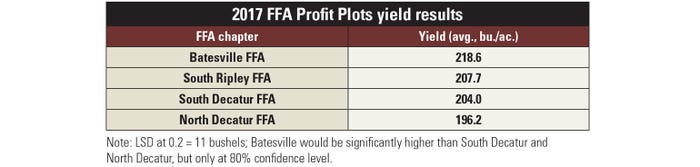

Wahlman defines “least significant difference,” or LSD, as how many bushels two treatments must vary by for someone to know they’re truly different. Otherwise, the difference could be due to experimental error.
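The article does not say which formula Wahlman used. One common textbook form, Fisher’s LSD for a randomized complete block trial, is shown here as an assumption. In it, MSE is the error mean square from the analysis of variance, r is the number of replications, and the t value is the two-sided critical value at significance level α for the error degrees of freedom.

```latex
\mathrm{LSD}_{\alpha} = t_{\alpha/2,\;\mathrm{df}_E}\,\sqrt{\frac{2\,\mathrm{MSE}}{r}},
\qquad \mathrm{df}_E = (k-1)(r-1)
```

With k = 4 recipes and r = 4 replications, the error degrees of freedom would be (4 − 1)(4 − 1) = 9.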

“Scientists want to be at least 90% sure that one treatment is different than another,” Wahlman says. “That is a significance level of 0.1. When I analyzed yield results, I didn’t find differences at that level. There was too much background noise, meaning too many things like differences in soil type between the reps and other factors kept us from knowing what caused differences.”

Although a researcher wouldn’t normally settle for an 80% confidence level, or a significance level of 0.2, Wahlman did so to make a point. At that level, the LSD was 11 bushels. “That means we could be 80% confident that the highest yield was due to the recipe, and that it should happen again. However, that’s not enough confidence to make good decisions.”
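To see how the LSD shifts with the confidence level, here is a minimal Python sketch. It assumes a randomized complete block analysis and the textbook Fisher LSD, t × sqrt(2 × MSE / r), which may not match the exact method used at SEPAC. The yields are hypothetical, and the t values are standard table entries for 9 error degrees of freedom, so the output will not reproduce the 11-bushel figure from the trial.

```python
from math import sqrt
from statistics import mean

# Hypothetical yields (bu/acre); list position = replication (block).
# Made-up numbers for illustration, NOT the actual trial data.
yields = {
    "Recipe A": [230, 198, 201, 195],
    "Recipe B": [200, 202, 197, 199],
    "Recipe C": [196, 200, 204, 198],
    "Recipe D": [199, 195, 200, 202],
}

# Two-sided Student's t critical values for 9 error degrees of freedom
# (4 recipes x 4 reps -> (4-1)*(4-1) = 9), taken from a standard t-table.
T_CRIT_DF9 = {0.10: 1.833, 0.20: 1.383}

def rcbd_mse(data):
    """Error mean square for a randomized complete block design."""
    names = list(data)
    k = len(names)                 # number of treatments (recipes)
    r = len(data[names[0]])        # number of replications (blocks)
    values = [y for reps in data.values() for y in reps]
    grand = mean(values)
    ss_total = sum((y - grand) ** 2 for y in values)
    ss_treatment = r * sum((mean(data[n]) - grand) ** 2 for n in names)
    block_means = [mean(data[n][j] for n in names) for j in range(r)]
    ss_block = k * sum((b - grand) ** 2 for b in block_means)
    ss_error = ss_total - ss_treatment - ss_block
    return ss_error / ((k - 1) * (r - 1)), r

def lsd(mse, r, t_crit):
    """Fisher's least significant difference between two treatment means."""
    return t_crit * sqrt(2 * mse / r)

mse, reps = rcbd_mse(yields)
lsd_90 = lsd(mse, reps, T_CRIT_DF9[0.10])  # 90% confidence
lsd_80 = lsd(mse, reps, T_CRIT_DF9[0.20])  # 80% confidence

print(f"LSD at 0.1 significance: {lsd_90:.1f} bu")
print(f"LSD at 0.2 significance: {lsd_80:.1f} bu")
```

Relaxing the significance level from 0.1 to 0.2 shrinks the LSD, so smaller yield gaps get labeled as differences. The trade-off is a greater chance that a labeled difference is just noise.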

He concluded by telling students that if one yield wasn’t statistically different from another, the trial couldn’t show that the two treatments truly differed. “As far as we can tell, the difference may have been due to experimental error. One might actually be as good as the other one.”
